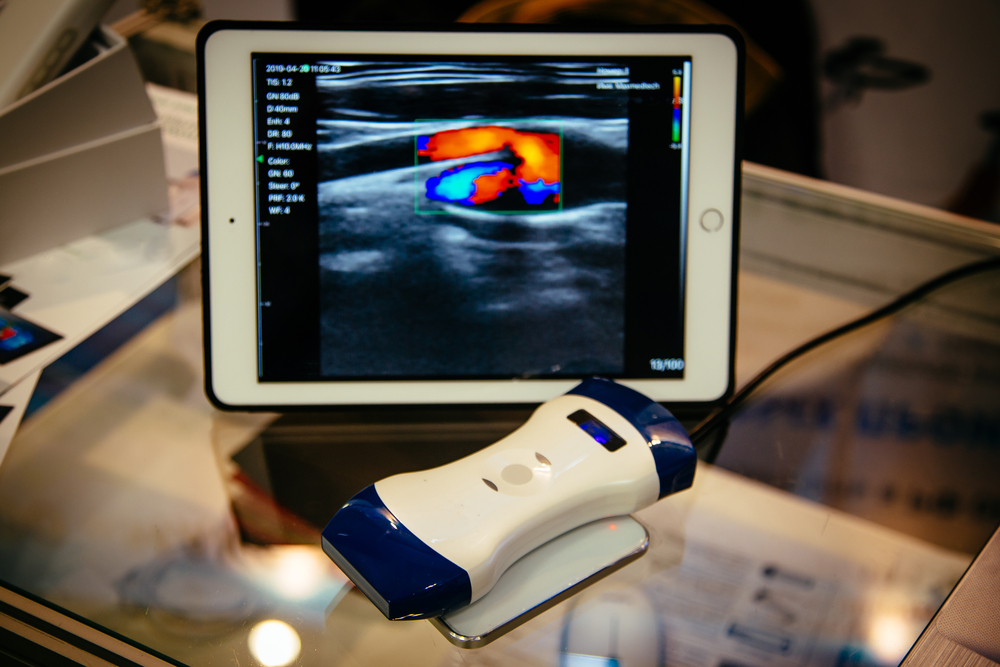

DARPA is taking a stab at improving portable ultrasound devices with AI to help respond to injuries in real time, directly on the battlefield.

While Point-Of-Care Ultrasound (POCUS) devices are already on the market, the Defense Advanced Research Projects Agency (DARPA) argues that current devices are too expensive and fall short on interpreting key datasets.

Now, the Pentagon’s research funding arm is looking to develop an AI prototype that can better interpret and make use of POCUS data, launching the POCUS AI research program and opportunity on Monday.

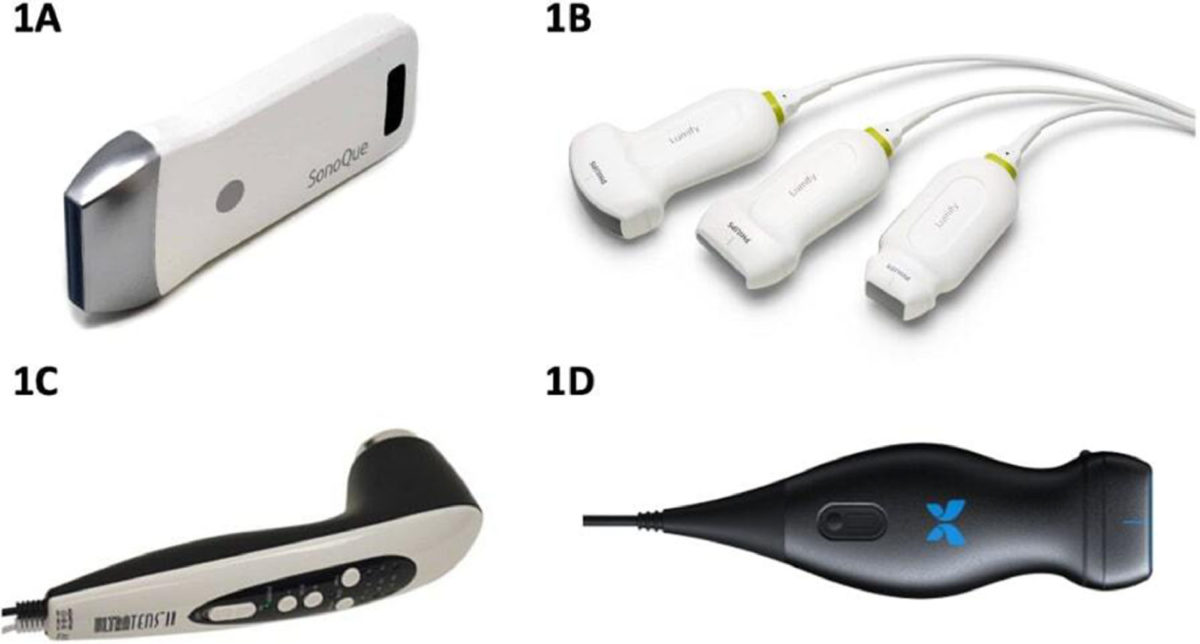

Commercially available portable ultrasound pocket probes. (1A) SonoQue portable, wireless, linear probe (SonoQue, Yorba Linda, CA). (1B) Lumify curved, linear, and phased-array probes (Philips, Amsterdam, Netherlands). (1C) UltraTENS II portable ultrasound probe (TENS Pros, St Louis, MO). (1D) Butterfly iQ (Butterfly Network, Inc, Guilford, CT). Source: Journal of Cardiothoracic and Vascular Anesthesia

The challenge, according to DARPA, is that the few AI tools that have been developed to date have required “hundreds of POCUS patient datasets to be collected and manually annotated by expert clinicians for each medical condition.”

Therefore, the POCUS AI program “will address this challenge through advances in AI techniques that use limited training data and incorporate prior knowledge, including ultrasound domain expertise, to enhance utilization of POCUS in austere environments.”

So, the idea is to develop algorithms that can assist highly trained medical professionals in carrying out accurate diagnostic and intervention procedures for certain injuries, while taking data from patient histories and known medical conditions into account.

Specifically, the algorithms will have to demonstrate capabilities for not just one, but all four of the following Pentagon-relevant battlefield medical needs:

- Detection of pneumothorax (collapsed lung)

- Measurement of optic nerve sheath diameter (which can be used to assess intracranial pressure)

- Nerve block guidance (which can be used to provide accurate placement of local anaesthetic)

- Verification of endotracheal intubation (to make sure a breathing tube has been correctly placed)

The research program will consist of two phases.

Phase 1 will evaluate the feasibility of developing AI models for POCUS classification from very limited model training data, and Phase 2 will demonstrate proof of concept that such models can be reused to serve additional POCUS applications.

The AI models must report results within five seconds on a mobile device by the end of the first phase.

In the end, the goal is to develop a prototype AI model capable of providing real-time interpretation of POCUS data for multiple applications, demonstrating the feasibility of automated interpretation of POCUS using limited model training data and providing a foundation for future work.

Are we getting closer to a Star Trek-like medical tricorder?

In 2012, Qualcomm launched “The Qualcomm Tricorder XPRIZE,” challenging researchers to develop a Star Trek-like medical device that would be able to accurately diagnose a set of 13 medical conditions independent of a healthcare professional or facility, capture and measure five vital signs, and provide a compelling consumer experience.

More than five years passed before a winner was declared in 2017.

Basil Leaf Technologies (XPRIZE team name: Final Frontier Medical Devices), a small startup that initially consisted of seven people, took home first prize. It edged out Dynamical Biomarkers Group, which had more than 50 doctors, scientists, and programmers, along with the backing of HTC and the Taiwanese government.

One of the key takeaways from the challenge was that it wasn’t enough to use AI to visualize what was going on through signal and image analysis. It was more important for the AI to think like a doctor when making diagnoses and interpreting the data.

“I think we did it in the reverse direction of most teams that may have started with the cool sensors and then built the AI afterward,” Final Frontier team leader Basil Harris told MobiHealthNews.

He added that his winning team approached the challenge “from the AI perspective of ‘What do I do in the ER to make a diagnosis?’”

Chung-Kang Peng, leader of the second-place team, agreed that this was the correct approach.

“We initially naively thought it was purely a technology problem,” said Peng.

“We developed algorithms for image analysis, for signal analysis. Like Basil said, it’s a completely different approach.

“At the end, we reached pretty much the same conclusion — the clinical AI needed to help you do the screening, diagnosis, prescreening. Those are actually more important,” he added.

Here we are four years later, and DARPA is stressing the same point: the importance of developing algorithms that help professionals accurately make diagnoses and carry out medical procedures.

Whereas modern adaptations of the fictional Star Trek tricorder can be waved like a magic wand to detect and treat almost any ailment instantaneously, real-world devices today still have quite a ways to go.

However, if you look at the core functions of the original tricorder (scanning the body, recording the data, and computing the results), then we are looking at a fairly stable, albeit basic, foundation with many future applications ahead, ultrasound being just one component.

In fact, just a few short months ago, on December 7, 2020, Cold Spring Harbor Laboratory scientists announced they had developed the “world’s first DNA tricorder in your pocket.”

By pairing an iPhone with a handheld DNA sequencer, users are able to create a mobile genetics laboratory, reminiscent of the “tricorder” featured in Star Trek, according to the announcement.

Next week, on February 8, DARPA Program Manager Dr. Paul Sheehan will hold an Ask Me Anything event for members of the PolyPlexus community to learn more about the POCUS AI program.

PolyPlexus is a social network launched by DARPA in March 2019 that serves as an online platform for its research and development incubators.
