Governments across the world are implementing policies and practices to control the fake news monster of social media. However, who decides what is fake news? Why don’t we decide for ourselves?

Canadian Prime Minister Justin Trudeau announced a digital charter to impose “meaningful financial consequences” on tech companies if they don’t curb misinformation on their platforms.

During an online extremism summit, world leaders including Trudeau, French President Emmanuel Macron, and New Zealand Prime Minister Jacinda Ardern signed the ‘Christchurch Call to Action’, a non-binding agreement under which governments and private companies commit to curbing online extremism through regulation and self-policing.

Tech giants Facebook, Microsoft, Twitter, Google, and Amazon have signed the agreement.

Singapore has passed an anti-fake news law that empowers authorities to police online platforms and even private chat groups. Under the new law, the government can order platforms to remove statements it deems false and “against the public interest”, and to post corrections.

After the devastating suicide bombings on Easter Sunday, Sri Lanka banned social media for nine days to stop the spread of damaging hate messages. The ban covered Facebook, WhatsApp, Instagram, YouTube, Viber, and Snapchat.

The Sri Lankan government was praised for taking a tough stand against panicked misinformation. At the same time, many Sri Lankans were cut off from communicating with relatives and were left with only government-friendly media for information.

While such initiatives aim to counter online extremism and misinformation, they do not fully solve the problem. In fact, they either invite third-party bias or curb freedom.

Fact-Checker Bias

Two years ago, Facebook said it would rely on third-party fact-checkers to curb fake news. As it turned out, that third party consisted of a handful of media organizations with their own political leanings.

Facebook’s push to reduce fake news in its news feed also revealed that it removes the financial incentive from spammers, but not from sensational stories with the potential to sway elections.

If fact-checkers are employed, won’t they be puppets in the hands of their employers?

Hence, government-employed fact-checkers will be obligated to the government to allow content that benefits it and curb content that does not.

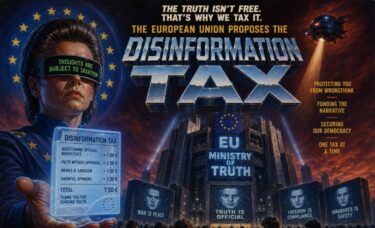

This sets up a person or an organization to be the moral authority on what is real or fake, offensive or friendly — a type of dystopian Ministry of Truth.

And in the process, what happens to free speech?

Self-Checking News

As users of platforms like Facebook, WhatsApp, and YouTube, we often do not evaluate information for ourselves. Our blind trust in information outlets and platforms often leads us to form beliefs without researching all sides of a story.

A Canadian Journalism Foundation survey found that about 40% of Canadians have difficulty distinguishing a fake news story from a fact-based one.

In a survey of more than 74,000 people in 37 markets across the globe, the 2018 Digital News Report found that more than half (51%) of the respondents trust the news media they themselves use most of the time.

In a news analysis by BuzzFeed during the 2016 election year, the most popular fake articles got more Facebook shares, reactions, and comments than actual high-quality news.

We live in cocoons of comfortable beliefs, a veritable echo chamber shared with those who think as we do. We seldom step out of that comfort zone, and we lose our objectivity in the process.

Social media platforms do not want this objectivity in us either. They want us to connect with like-minded individuals, because doing so keeps us on their platforms.

Should we now blindly trust a government to tell us what to believe?