If a technology promotes social bias, should we keep it around? All technical developments reflect the society in which they are developed and implemented. Do we hold the makers of a technology responsible when they create something that reinforces a flawed social structure?

Google has refused to remove Absher, a controversial Saudi Arabian app that allows men to keep track of women. After reviewing it, Google concluded that the app does not violate any Play Store policies.

Google was asked to remove the app from its store by the office of US Representative Jackie Speier and other members of the US Congress. According to this group, if Apple and Google continue to host the app, they are “accomplices in the oppression of Saudi Arabian women.”

What is Absher?

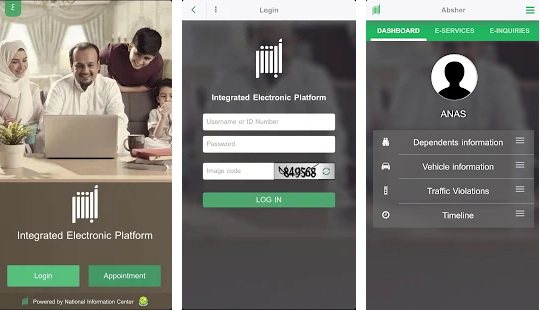

Absher App on Google Play

Absher is a Saudi Arabian government app that lets users complete services, such as renewing a passport or applying for a national ID card or driver’s license, with a few clicks. Among its many functions, it also lets male guardians in Saudi Arabia grant or withdraw travel permission for women.

Guardians can even receive SMS alerts when a woman uses her passport to travel or leaves a specific area. The app also lets citizens complete bureaucratic tasks such as giving women permission to seek a job, a permission that is mandatory in Saudi Arabia.

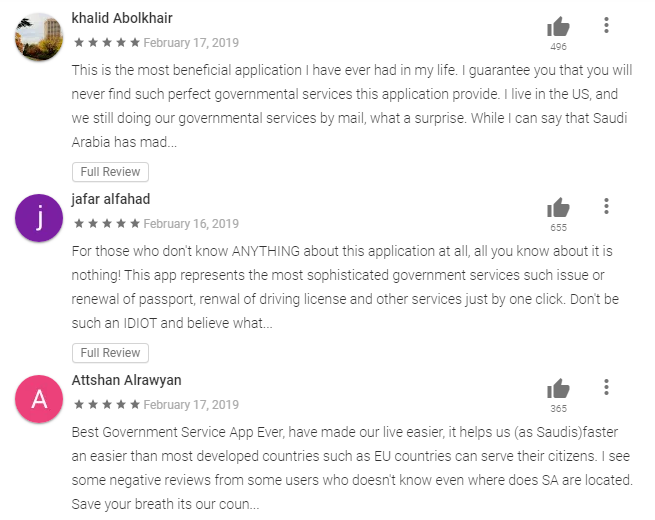

The app has been available since 2015 and has been a success, with more than a million downloads and glowing reviews from the men who use it.

It is no wonder, then, that Google has not taken it down. But is it naive to expect a company as successful as Google to choose social responsibility over profits?

Practical View of the App

Absher App Reviews on Google Play

According to The Guardian, some are calling the outrage surrounding this app ‘counterproductive’, reasoning that removing it might further restrict Saudi Arabian women. The argument is that the app removes a burdensome process that male guardians could otherwise use as an excuse for not granting travel permission.

In effect, the app can be used to navigate the male guardianship law, under which “a woman’s father, brother, husband or son has the authority to make critical decisions on her behalf,” according to the BBC. If he wishes, a guardian can lift all restrictions on a woman’s travel, so that she no longer needs a guardian or his approval for each trip.

This may be a more practical way of looking at the app, but it also reveals the deep-seated problems that a marginalized group continues to face. It is natural for women to find ways around a problem that seems out of their hands, but the problem here is on a far larger scale. The app is a symbol of the existing gender gap, and by letting it continue, however efficient it is, we are supporting that gap in society.

After all, this kind of tech makes it convenient for men to track women from the comfort of a smartphone; without the app, doing so would be harder. By not removing the app, Google is effectively endorsing the practice of monitoring women.

Not Google’s First Time

This is not the first time Google has been criticized for hosting apps that target a community. Last year, an app called Living Hope Ministries, which promotes LGBTQ conversion therapy, shocked people. Apple removed the app in December, but it is still available on the Google Play Store.

These apps appear to be in conflict with Google Play policy, which says, “We don’t allow apps that promote violence, or incite hatred against individuals or groups based on race or ethnic origin, religion, disability, age, nationality, veteran status, sexual orientation, gender, gender identity, or any other characteristic that is associated with systemic discrimination or marginalization.”

The description of Absher on the Google Play Store says, “Absher has been designed and developed with special consideration to security and privacy of user’s data and communication. So, you can safely browse your profile or your family members, or labors working for you, and perform a wide range of eServices online.”

Is Tech Neutral?

Kranzberg’s first law states, “Technology is neither good nor bad; nor is it neutral.” (Dr. Melvin Kranzberg was a professor of the history of technology at the Georgia Institute of Technology.)

The “nor is it neutral” part suggests that technology takes on the character of the society that interacts with it most. In 2016, Microsoft launched an AI chatbot, Tay, which was designed to learn Millennial vernacular and jargon from its interactions on Twitter. By the end of its first day, the chatbot was spewing racist hatred.

A word-embedding model builds its vocabulary by association: words that appear in similar contexts end up with similar numerical representations. According to The Guardian, researchers working with Word2vec, a word-embedding model, found that when asked to complete the analogy “Man is to woman as computer programmer is to ?”, the model answered “homemaker”. The answer reflects patterns in the text the model learned from, but by reproducing them, Word2vec becomes an instrument that widens the gender gap, reinforcing the biased view that programmers are men and homemakers are women.
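To make the mechanism concrete: embedding models such as Word2vec represent each word as a list of numbers, and analogy questions are commonly answered with vector arithmetic (programmer minus man plus woman), returning the closest remaining word by cosine similarity. The minimal Python sketch below assumes a handful of hand-picked, three-dimensional toy vectors; they are illustrative only and are not real Word2vec values, and the names `TOY_EMBEDDINGS` and `complete_analogy` are hypothetical. Because “programmer” is given a male lean, as skewed training text might give it, the arithmetic lands on “homemaker”.

```python
import math

# Hand-picked toy 3-D vectors for illustration only; NOT real Word2vec output.
# Hypothetical axes: [gender (+ male / - female), computing, domestic work]
TOY_EMBEDDINGS = {
    "man":        [1.0, 0.0, 0.0],
    "woman":      [-1.0, 0.0, 0.0],
    # "programmer" gets a male lean to mimic a skew in the training text
    "programmer": [0.8, 1.0, 0.0],
    "homemaker":  [-0.8, 0.0, 1.0],
    "engineer":   [0.8, 0.9, 0.0],
    "doctor":     [0.3, 0.2, 0.0],
}


def cosine(u, v):
    """Cosine similarity: how closely two vectors point in the same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def complete_analogy(a, b, c, vectors):
    """Answer 'a is to b as c is to ?' using the vector offset c - a + b."""
    target = [vc - va + vb for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    # The query words themselves are excluded from the candidate answers.
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, vectors[w]))


if __name__ == "__main__":
    print(complete_analogy("man", "woman", "programmer", TOY_EMBEDDINGS))
```

Nothing in the arithmetic itself is prejudiced; in this toy, the skew lives entirely in the vectors, which is the sense in which such a model mirrors the text it learned from.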

This brings us to Kranzberg’s fourth law, “Technologically ‘sweet’ solutions do not always triumph over political and social forces,” and his sixth law, “the function of the technology is its use by human beings–and sometimes, alas, its abuse and misuse.”

In the case of Absher, the app’s efficiency gives citizens quick access to services, but it also upholds a social order that keeps women in check. It can also easily become an instrument of abuse: a woman trying to escape domestic abuse can be tracked by her abuser with a few clicks, and he can withdraw her travel permission to ensure she never escapes or seeks asylum.

Tech and Abuse

While Saudi Arabia is being criticized for its archaic laws towards women, the rest of the world is not free from the abuse and misuse of tech to harass women and minority groups. Any social media feed reflects the hostility some groups of people hold towards others. These sentiments have always existed; technology simply makes it easier to spread them far, wide, and fast.

There is stalking on the internet, slut-shaming on social media, and cyberbullying on smart devices. Increasingly sophisticated IoT and wearable devices can be used to track a person’s movements. A SmartSafe survey found that 97% of domestic violence services organisations reported that abuse survivors had been harassed via technology.

Tech may be neither good nor bad, but its non-neutrality suggests that its problems stem from the political dogmas of the societies that shape it. Shouldn’t we, then, expect the Facebooks, Googles, and Apples of the world to create tech that rises above this non-neutrality and checks social bias? After all, they have the resources.

The question is, do they have the will?