The fact that AI can behave unexpectedly isn’t preventing the US Army from putting all its AI eggs in one basket, with the technology spanning every battlefield system.

Making AI trustworthy is the goal of every developer working on this technology, but so far AI has not proven fully deserving of our trust.

On Monday, however, Army AI Task Force (AAITF) Director Brig. Gen. Matthew Easley said that AI “needs to span every battlefield system that we have, from our maneuver systems for our fire control systems to our sustainment systems to our soldier systems to our human resource systems and our enterprise systems.”


If the Army is that dedicated to putting AI in every battlefield system, it must believe that it will be able to trust and control the AI, something developers are still struggling to achieve.

For example, just last month OpenAI announced that it created an AI that broke the simulated laws of physics to win at hide and seek.

Taking what was available in its simulated environment, the AI began to exhibit “unexpected and surprising behaviors,” “ultimately using tools in the environment to break our simulated physics,” according to the team.

We’ve observed AIs discovering complex tool use while competing in a simple game of hide-and-seek. They develop a series of six distinct strategies and counterstrategies, ultimately using tools in the environment to break our simulated physics: https://t.co/2lbOxo19rL

— OpenAI (@OpenAI) September 17, 2019

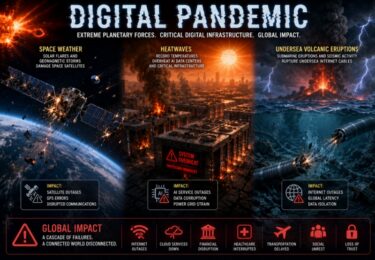

Now imagine if an AI were to exhibit “unexpected and surprising behaviors” within a military setting. What could possibly go wrong?

The AAITF director said, “We see AI as an enabling technology for all Army modernization priorities, from future vertical lift to long range precision fires to soldier lethality.” That makes me wonder: has the Army already solved the trust issue with AI and just not told us yet, or is that something it is still working on?

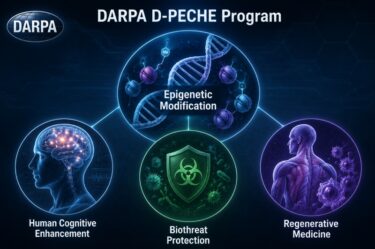

We do have proof that the military has been working on trustworthiness through projects carried out by the Defense Advanced Research Projects Agency (DARPA).

Launched in February, DARPA’s Competency-Aware Machine Learning (CAML) Program aims “to develop competence-based trusted machine learning systems whereby an autonomous system can self-assess its task competency and strategy, and express both in a human-understandable form, for a given task under given conditions.”

DARPA acknowledged that “the machines’ lack of awareness of their own competence and their inability to communicate it to their human partners reduces trust and undermines team effectiveness.”

In other words, the military is aware that AI can act unpredictably, and it wants to make sure that Prometheus isn’t let loose in machine learning systems.


Prometheus liberated humankind by defying the gods and bringing it the flame of knowledge. DARPA wants machine learning to stay trustworthy rather than free itself in the same way and spread like an uncontrollable wildfire.

Last year, DARPA announced that it was building an Artificial Intelligence Exploration (AIE) program to turn machines into “collaborative partners” for US national defense.

When DARPA launched the Guaranteeing AI Robustness against Deception (GARD) project, Program Manager Dr. Hava Siegelmann admitted, “We’ve rushed ahead, paying little attention to vulnerabilities inherent in machine learning platforms – particularly in terms of altering, corrupting or deceiving these systems.”

“We must ensure machine learning is safe and incapable of being deceived,” she added.

The Army has gone all-in on AI, essentially putting all of its eggs in one basket in its mission to develop an “AI ecosystem for use within the Army,” which will encompass just about every aspect of battlefield systems.

With so much power being centralized and consolidated in AI, surely they’ve figured out a way to make it trustworthy, haven’t they?