Between Adobe Voco and Stanford University’s Face2Face technologies, virtually any person, dead or alive, can be imitated through vocal and facial manipulation.

There is a great line from the 1993 blockbuster Jurassic Park that comes from Dr. Ian Malcolm, played by Jeff Goldblum, when he confronts the park’s owner on the ethics and dangers of reviving dinosaurs after 65 million years of extinction:

“Your scientists were so preoccupied with whether or not they could, they didn’t stop to think if they should.”

That same sentiment is now being voiced about voice and facial reenactment technologies, which have the power to imitate virtually anyone who has ever been recorded on audio or video.

Adobe Voco

Last month, Adobe rolled out Project Voco, which is like Photoshop for voice. The company’s on-stage demonstration revealed how vocal and speech pattern recognition can be used to make someone appear to say something that was never actually said.

During the Adobe MAX 2016 Sneak Peeks presentation in San Diego, co-hosted by Jordan Peele of Comedy Central’s hit show “Key and Peele,” a recording of his comedy partner Keegan-Michael Key was manipulated so that instead of the actual statement, “I kissed my dogs and my wife,” Adobe Voco made it say, “I kissed Jordan three times.”

While this plays out as a harmless bit of fun, it has raised ethical concerns about the future integrity of journalism.

About 20 minutes’ worth of authentic audio from a real person is enough data for the software to generate whatever the user wants that person to say.

University of Stirling lecturer Dr. Eddy Borges Rey told the BBC, “It seems that Adobe’s programmers were swept along with the excitement of creating something as innovative as a voice manipulator, and ignored the ethical dilemmas brought up by its potential misuse.”

Borges Rey added, “This makes it hard for lawyers, journalists, and other professionals who use digital media as evidence.”

OK, so manipulating audio is one thing, but how easy is it to fake a video?

Face2Face Real-time Facial Manipulation from Stanford University

Much like Adobe Voco’s voice manipulation, Stanford University is developing technology that can realistically alter any video recording in real time.

“Our goal is to animate the facial expressions of the target video by a source actor and re-render the manipulated output video in a photo-realistic fashion,” reads the abstract from Stanford.

While the abstract mentions a “source actor,” it is curious that the prestigious university chose to highlight political figures like George W. Bush, Vladimir Putin, and Donald Trump for the video’s facial manipulation demonstration.

It seems that although Stanford is developing the technology, they are aware of the ethical consequences and are showing everyone out in the open how dangerous this technology can be.

According to the Stanford team, “We convincingly re-render the synthesized target face on top of the corresponding video stream such that it seamlessly blends with the real-world illumination.”

However, Stanford is not the only research center working on this technology; there are plenty of others, including companies such as Disney.

There are already rumors circulating that major news networks sometimes use green screens instead of sending reporters to broadcast from actual locations, and the same allegations have been raised about politicians using green screens at “rallies” to make it look like more enthusiastic supporters were in attendance.

Fake News, Green Screen Allegations, and Julian Assange

Perhaps the reason the Reddit group WhereIsAssange is so adamant that the WikiLeaks editor is missing is the availability of voice and facial manipulation technologies. In Assange’s case, many interviews are only available as scratchy audio, and the lower the quality of a recording, the harder it is to determine its authenticity.

Several users on YouTube have also claimed that the John Pilger interview with Julian Assange was manipulated, pointing to anomalies in the video where Assange’s collar seems to “lag” and looks doctored.

With regard to the fake news epidemic, the major news networks and media publications have lambasted smaller outlets with accusations of spreading fake news, and rightly so in many cases, but the mainstream media has been accused of using technology to alter reports as well.

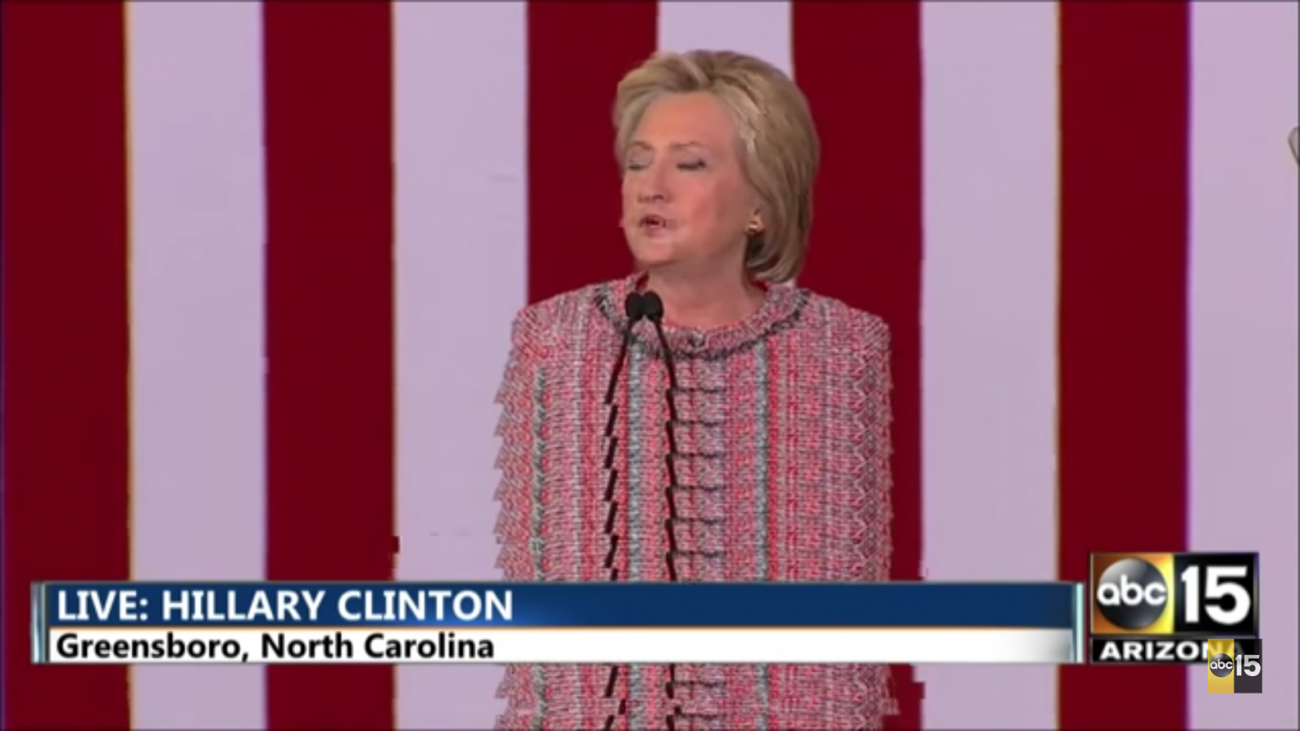

Green screens have been around for a long time and are about as common in newsrooms as autotune is at a pop concert. There have been many accusations that the major news outlets use green screens to report on stories that are allegedly “on-location,” and rumors that Hillary Clinton used this technology at her rallies remain unproven, though hotly debated on both sides.

With the technology available, both sides can fake any piece of audio or video to fit their beliefs and serve their own interests.

Even if the technology is used to make it look like there are more people present at an event, or so that an audience sounds more animated, it would be a terrible threat to democracy because it alters perception.

It’s psychological warfare that has the power to sway elections, especially as they heat up and undecided voters jump on the bandwagon for whichever side is perceived as winning.

At any rate, between green screens and vocal and facial manipulation, is there really anything that can’t be faked?